What is an acceptable failure rate of a vehicle's perception system?

And how does this influence the development and regulation of autonomous vehicles (AVs)?

These were among the key areas covered by Professor Amnon Shashua, senior vice president of Intel and chief executive officer of Mobileye, at this week's CES 2021 event.

In an online session, Shashua revealed the company measures failure rate in terms of hours of driving.

“If we google, we will find out that about 3.2 trillion miles a year in the US are being travelled by cars and there are about six million crashes a year,” he said.

“So divide one by another, you get: every 500,000 miles on average there is a crash.”

“Let's assume that 50% it's your fault in a crash, so let's make this one million and let's divide this by 20 miles per hour on average, so we get about once every 50,000 hours of driving we'll have a crash,” he added.
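Shashua's back-of-the-envelope arithmetic can be reproduced in a few lines. The sketch below uses only the figures he quotes; the variable names are illustrative. Without his intermediate rounding the result comes out nearer 53,000 hours, and rounding miles per crash to 500,000, as he does, gives the quoted 50,000.

```python
# Figures as quoted by Shashua; variable names are illustrative.
MILES_PER_YEAR_US = 3.2e12    # miles driven per year in the US
CRASHES_PER_YEAR_US = 6e6     # crashes per year in the US
AT_FAULT_SHARE = 0.5          # assumed share of crashes that are "your fault"
AVERAGE_SPEED_MPH = 20        # assumed average driving speed

# Unrounded chain: miles per crash -> miles per at-fault crash -> hours.
miles_per_crash = MILES_PER_YEAR_US / CRASHES_PER_YEAR_US
miles_per_at_fault_crash = miles_per_crash / AT_FAULT_SHARE
hours_per_at_fault_crash = miles_per_at_fault_crash / AVERAGE_SPEED_MPH

# Same chain using Shashua's rounded figure of 500,000 miles per crash.
rounded_hours = 500_000 / AT_FAULT_SHARE / AVERAGE_SPEED_MPH

print(f"Miles per crash (unrounded): {miles_per_crash:,.0f}")
print(f"Hours per at-fault crash (unrounded): {hours_per_at_fault_crash:,.0f}")
print(f"Hours per at-fault crash (rounded as quoted): {rounded_hours:,.0f}")
```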

Shashua then applied this human-level failure rate to a scenario in which a robotic driving system is deployed across a fleet of 50,000 cars.

“It would mean that every hour on average, we will have an accident that is our fault because it’s a failure of the perception system,” he continued.

“From a business perspective this is not sustainable, and from a society perspective, I don't see regulators approving something like this, so you have to be 1,000 times better than these statistics.”
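The fleet-level figures follow from simple division. The rough sketch below assumes, as the quote implies, that all 50,000 cars are driving at the same time and fail independently; the function and variable names are hypothetical and are not taken from Mobileye.

```python
# Quoted figures; names are illustrative, not Mobileye's.
HOURS_PER_AT_FAULT_CRASH = 50_000   # per vehicle, human-level performance
FLEET_SIZE = 50_000                 # cars deployed
IMPROVEMENT_FACTOR = 1_000          # "1,000 times better"


def fleet_hours_between_crashes(hours_per_crash_per_vehicle, fleet_size):
    """Average hours between at-fault crashes across the whole fleet.

    Assumes every vehicle is on the road simultaneously and that
    failures are independent, which is a simplification.
    """
    return hours_per_crash_per_vehicle / fleet_size


# Human-level performance: one at-fault crash somewhere in the fleet per hour.
baseline_fleet_hours = fleet_hours_between_crashes(
    HOURS_PER_AT_FAULT_CRASH, FLEET_SIZE
)

# Target: each vehicle is 1,000 times more reliable.
target_hours_per_vehicle = HOURS_PER_AT_FAULT_CRASH * IMPROVEMENT_FACTOR
target_fleet_hours = fleet_hours_between_crashes(
    target_hours_per_vehicle, FLEET_SIZE
)

print(f"Baseline: one fleet crash every {baseline_fleet_hours:,.0f} hour(s)")
print(f"Target per vehicle: {target_hours_per_vehicle:,} hours between crashes")
print(f"Target: one fleet crash every {target_fleet_hours:,.0f} hours")
```

Under these assumptions the 1,000-fold target corresponds to 50 million driving hours between perception failures per vehicle, or about one at-fault crash across the whole fleet every 1,000 hours.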

Mobileye is acutely aware of this, having just announced it will be testing AVs in new cities this year: Detroit, Tokyo, Shanghai, Paris and (pending regulation) New York City.

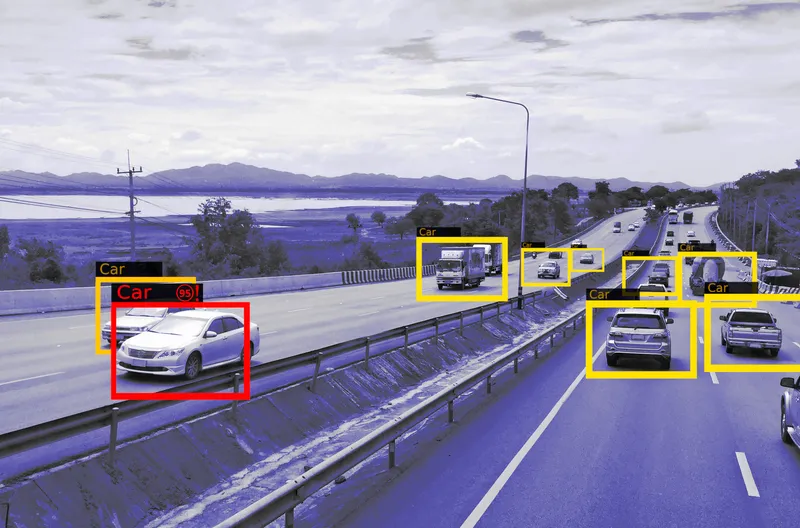

From a technological point of view, Shashua insisted it is “so crucial to do the hard work” rather than combining all the sensors at the outset and carrying out a “low-level fusion – which is easy to do”.

“Forget about the radars and Lidars, solve the difficult problem of doing an end-to-end, standalone, self-contained camera-only system and then add the radars and Lidars as a redundant add-on,” he concluded.