June 17, 2016

According to the Automotive Electronics Roadmap Report, the latest report from market research firm IHS, as in-vehicle infotainment systems and advanced driver assistance systems (ADAS) grow in complexity and penetration, there is a growing need for hardware and software solutions that support artificial intelligence (AI), which uses electronics and software to emulate the functions of the human brain. In fact, unit shipments of AI systems used in infotainment and ADAS are expected to rise from just 7 million in 2015 to 122 million by 2025, says IHS. The attach rate of AI-based systems in new vehicles was 8 percent in 2015, and the vast majority of those systems were focused on speech recognition. However, the attach rate is forecast to rise to 109 percent in 2025, because many cars will carry multiple AI systems of various types.
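The forecast figures above can be checked with a little arithmetic. The sketch below is illustrative only and is not taken from the IHS report: the function names are hypothetical, and it assumes that "attach rate" means AI systems shipped divided by new vehicles produced, which is how an attach rate can exceed 100 percent when a car carries more than one system.

```python
def cagr(start, end, years):
    """Compound annual growth rate between two values over `years` years."""
    return (end / start) ** (1 / years) - 1


def implied_vehicles(units_shipped, attach_rate):
    """New-vehicle volume implied by a shipment count and an attach rate.

    Assumes attach rate = AI systems shipped / new vehicles produced
    (an assumption made for this sketch, not a definition from the report).
    """
    return units_shipped / attach_rate


# Figures quoted in the article.
shipments_2015 = 7e6
shipments_2025 = 122e6
attach_2015 = 0.08
attach_2025 = 1.09

growth = cagr(shipments_2015, shipments_2025, 2025 - 2015)
vehicles_2015 = implied_vehicles(shipments_2015, attach_2015)
vehicles_2025 = implied_vehicles(shipments_2025, attach_2025)

print(f"Implied annual growth in AI system shipments: {growth:.1%}")
print(f"Implied new vehicles, 2015: {vehicles_2015 / 1e6:.1f} million")
print(f"Implied new vehicles, 2025: {vehicles_2025 / 1e6:.1f} million")
```

Under these assumptions, shipments grow by roughly a third each year, and the implied vehicle volumes (about 88 million in 2015 and about 112 million in 2025) are derived values, not numbers stated by IHS.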

According to the report, AI-based systems in automotive applications are relatively rare today, but they will grow to become standard in new vehicles over the next five years, especially in two areas. The first is the infotainment human-machine interface, including speech recognition, gesture recognition (including handwriting recognition), eye tracking and driver monitoring, virtual assistance, and natural language interfaces. The second is ADAS and autonomous vehicles, including camera-based machine vision systems, radar-based detection units, driver condition evaluation, and sensor fusion engine control units (ECUs).

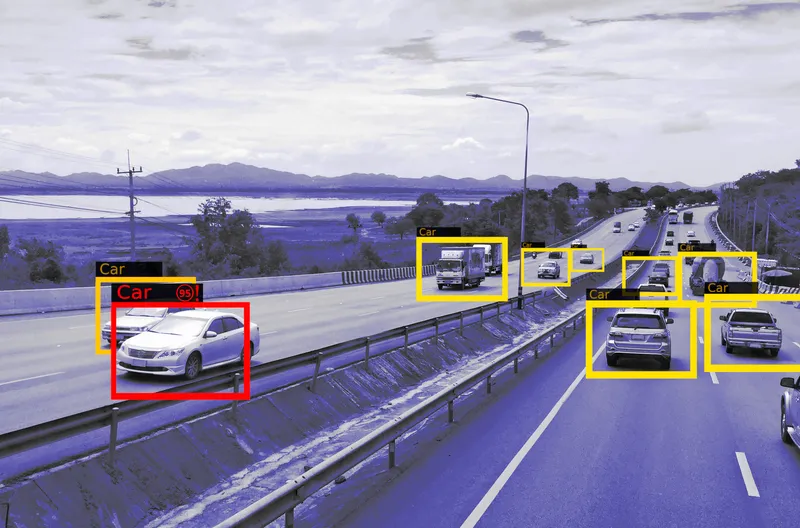

Specifically in ADAS, deep learning, which mimics human neural networks, presents several advantages over traditional algorithms; it is also a key milestone on the road to fully autonomous vehicles. For example, deep learning allows detection and recognition of multiple objects, improves perception, reduces power consumption, supports object classification, enables recognition and prediction of actions, and will reduce the development time of ADAS.

The hardware required to embed AI and deep learning in safety-critical and high-performance automotive applications at mass-production volume is not currently available, due to the high cost and the sheer size of the computers needed to perform these advanced tasks. Even so, elements of AI are already available in vehicles today. In the infotainment human-machine interfaces currently installed, most of the speech recognition technologies already rely on algorithms based on neural networks running in the cloud. The 2015 BMW 7 Series is the first car to use a hybrid approach, offering embedded hardware able to perform voice recognition in the absence of wireless connectivity. In ADAS applications, Tesla claims to implement neural network functionality, based on the Mobileye EyeQ3 processor, in its autonomous driving control unit.
